Rag With Nims

Haystack RAG Pipeline with Self-Deployed AI models using NVIDIA NIMs

In this notebook, we will build a Haystack Retrieval Augmented Generation (RAG) pipeline using self-hosted AI models served through NVIDIA Inference Microservices (NIMs).

The notebook is associated with a technical blog demonstrating the steps to deploy NVIDIA NIMs with Haystack into production.

The code examples expect the LLM Generator and Retrieval Embedding AI models to be already deployed as NIM microservices, following the procedure described in the technical blog.

You can also substitute the calls to NVIDIA NIMs with the same AI models hosted by NVIDIA on ai.nvidia.com.

For the Haystack RAG pipeline, we will use the Qdrant Vector Database and the self-hosted meta-llama3-8b-instruct for the LLM Generator and NV-Embed-QA for the embedder.

In the next cell, we will set the domain names and URLs of the self-deployed NVIDIA NIMs as well as the QdrantDocumentStore URL. Adjust these according to your setup.
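A minimal sketch of such a configuration cell is shown here. Only the two model names come from this notebook; every hostname and port is a placeholder assumption (the `example.com` addresses are not a real deployment), and the `/v1` suffix assumes the NIMs serve an OpenAI-style API under that prefix.

```python
# Placeholder endpoints: replace them with the addresses of your own deployment.
llm_nim_model_name = "meta-llama3-8b-instruct"  # model name used in this notebook
llm_nim_endpoint = "http://nims.example.com/llm"  # assumed URL of the LLM NIM

embedder_nim_model = "NV-Embed-QA"  # model name used in this notebook
embedder_nim_endpoint = "http://nims.example.com/embedding"  # assumed URL of the embedding NIM

qdrant_endpoint = "http://vectordb.example.com:30030"  # assumed URL of the Qdrant service

# Assumption: both NIMs serve an OpenAI-style API under the /v1 prefix.
llm_api_url = f"{llm_nim_endpoint}/v1"
embedder_api_url = f"{embedder_nim_endpoint}/v1"
```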

1. Check Deployments

Let's first check the vector database and the models self-deployed with NVIDIA NIMs in our environment. Have a look at the technical blog for the NIM deployment steps.

We can check the AI models deployed with NIMs and the Qdrant database using simple curl commands.
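As an alternative to curl, the same checks can be sketched in Python with the standard library only. This assumes the NIMs answer `GET /v1/models` with an OpenAI-style model list, which is consistent with the responses shown in sections 1.1 and 1.2. The helper names `fetch_json` and `model_ids` are illustrative and not part of any library.

```python
import json
from urllib.request import Request, urlopen


def fetch_json(url: str, timeout: float = 10.0) -> dict:
    """GET a URL and decode its JSON body (hypothetical helper)."""
    request = Request(url, headers={"Accept": "application/json"})
    with urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


def model_ids(models_response: dict) -> list:
    """Extract the model names from an OpenAI-style /v1/models response."""
    return [entry["id"] for entry in models_response.get("data", [])]


# Offline illustration, using the embedder response shape shown in this notebook.
sample = json.loads(
    '{"object":"list","data":[{"id":"NV-Embed-QA","created":0,'
    '"object":"model","owned_by":"organization-owner"}]}'
)
print(model_ids(sample))  # ['NV-Embed-QA']
```

Against a live deployment, calling `model_ids(fetch_json(...))` on your NIM's `/v1/models` URL should list the model you deployed; calling `fetch_json` on the Qdrant root URL should return its title and version.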

1.1 Check the LLM Generator NIM

{"object":"list","data":[{"id":"meta-llama3-8b-instruct","object":"model","created":1716465695,"owned_by":"system","root":"meta-llama3-8b-instruct","parent":null,"permission":[{"id":"modelperm-6f996d60554743beab7b476f09356c6e","object":"model_permission","created":1716465695,"allow_create_engine":false,"allow_sampling":true,"allow_logprobs":true,"allow_search_indices":false,"allow_view":true,"allow_fine_tuning":false,"organization":"*","group":null,"is_blocking":false}]}]}

1.2 Check the Retrieval Embedding NIM

{"object":"list","data":[{"id":"NV-Embed-QA","created":0,"object":"model","owned_by":"organization-owner"}]}

1.3 Check the Qdrant Database

{"title":"qdrant - vector search engine","version":"1.9.1","commit":"97c107f21b8dbd1cb7190ecc732ff38f7cdd248f"}

2. Perform Indexing

Let's first initialize the Qdrant vector database, create the Haystack indexing pipeline, and upload an example PDF. We will use the embedder model self-deployed with NIM.
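The indexing pipeline could be assembled roughly as follows. This is a sketch under stated assumptions, not the notebook's original cell: it presumes the `haystack-ai`, `nvidia-haystack`, `qdrant-haystack`, and `pypdf` packages are installed, and the embedding dimension (1024), the word-based split length (100), and the function name `build_indexing_pipeline` are illustrative choices. The component names and connections mirror the pipeline summary printed in this section. Depending on the `nvidia-haystack` version, a self-hosted NIM may also require passing `api_key=None` or setting `NVIDIA_API_KEY`.

```python
# Component wiring, taken from the pipeline summary printed in this notebook.
INDEXING_CONNECTIONS = [
    ("converter.documents", "cleaner.documents"),
    ("cleaner.documents", "splitter.documents"),
    ("splitter.documents", "embedder.documents"),
    ("embedder.documents", "writer.documents"),
]


def build_indexing_pipeline(embedder_model: str, embedder_api_url: str, qdrant_url: str):
    """Assemble the PDF indexing pipeline (sketch; requires Haystack at call time)."""
    # Imported lazily so this module still loads where Haystack is not installed.
    from haystack import Pipeline
    from haystack.components.converters import PyPDFToDocument
    from haystack.components.preprocessors import DocumentCleaner, DocumentSplitter
    from haystack.components.writers import DocumentWriter
    from haystack_integrations.components.embedders.nvidia import NvidiaDocumentEmbedder
    from haystack_integrations.document_stores.qdrant import QdrantDocumentStore

    # embedding_dim=1024 is an assumption; it must match the embedder's output size.
    document_store = QdrantDocumentStore(url=qdrant_url, embedding_dim=1024)

    pipeline = Pipeline()
    pipeline.add_component("converter", PyPDFToDocument())
    pipeline.add_component("cleaner", DocumentCleaner())
    # Splitting into 100-word chunks is an illustrative setting.
    pipeline.add_component("splitter", DocumentSplitter(split_by="word", split_length=100))
    pipeline.add_component(
        "embedder",
        NvidiaDocumentEmbedder(model=embedder_model, api_url=embedder_api_url),
    )
    pipeline.add_component("writer", DocumentWriter(document_store=document_store))

    for sender, receiver in INDEXING_CONNECTIONS:
        pipeline.connect(sender, receiver)
    return pipeline
```

Running the returned pipeline on a file would then look like `pipeline.run({"converter": {"sources": ["path/to/paper.pdf"]}})`, where the path is a placeholder.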

<haystack.core.pipeline.pipeline.Pipeline object at 0x7f267972b340>
🚅 Components
  - converter: PyPDFToDocument
  - cleaner: DocumentCleaner
  - splitter: DocumentSplitter
  - embedder: NvidiaDocumentEmbedder
  - writer: DocumentWriter
🛤️ Connections
  - converter.documents -> cleaner.documents (List[Document])
  - cleaner.documents -> splitter.documents (List[Document])
  - splitter.documents -> embedder.documents (List[Document])
  - embedder.documents -> writer.documents (List[Document])

We will upload to the vector database a PDF of NVIDIA's research paper on ChipNeMo, a domain-specific LLM for chip design. The paper is available here.

Calculating embeddings: 100%|██████████| 4/4 [00:00<00:00, 15.85it/s] 200it [00:00, 1293.90it/s]

{'embedder': {'meta': {'usage': {'prompt_tokens': 0, 'total_tokens': 0}}},

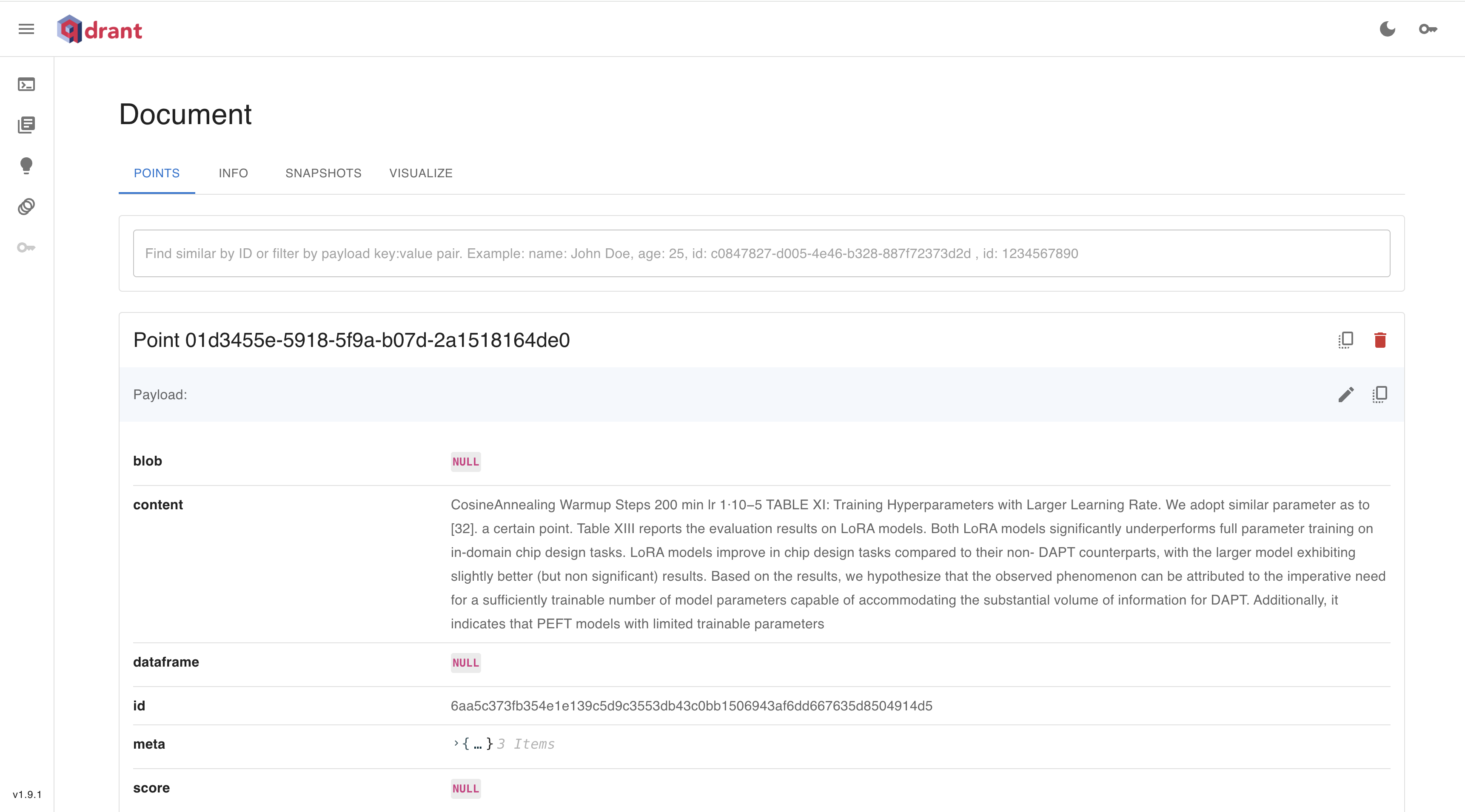

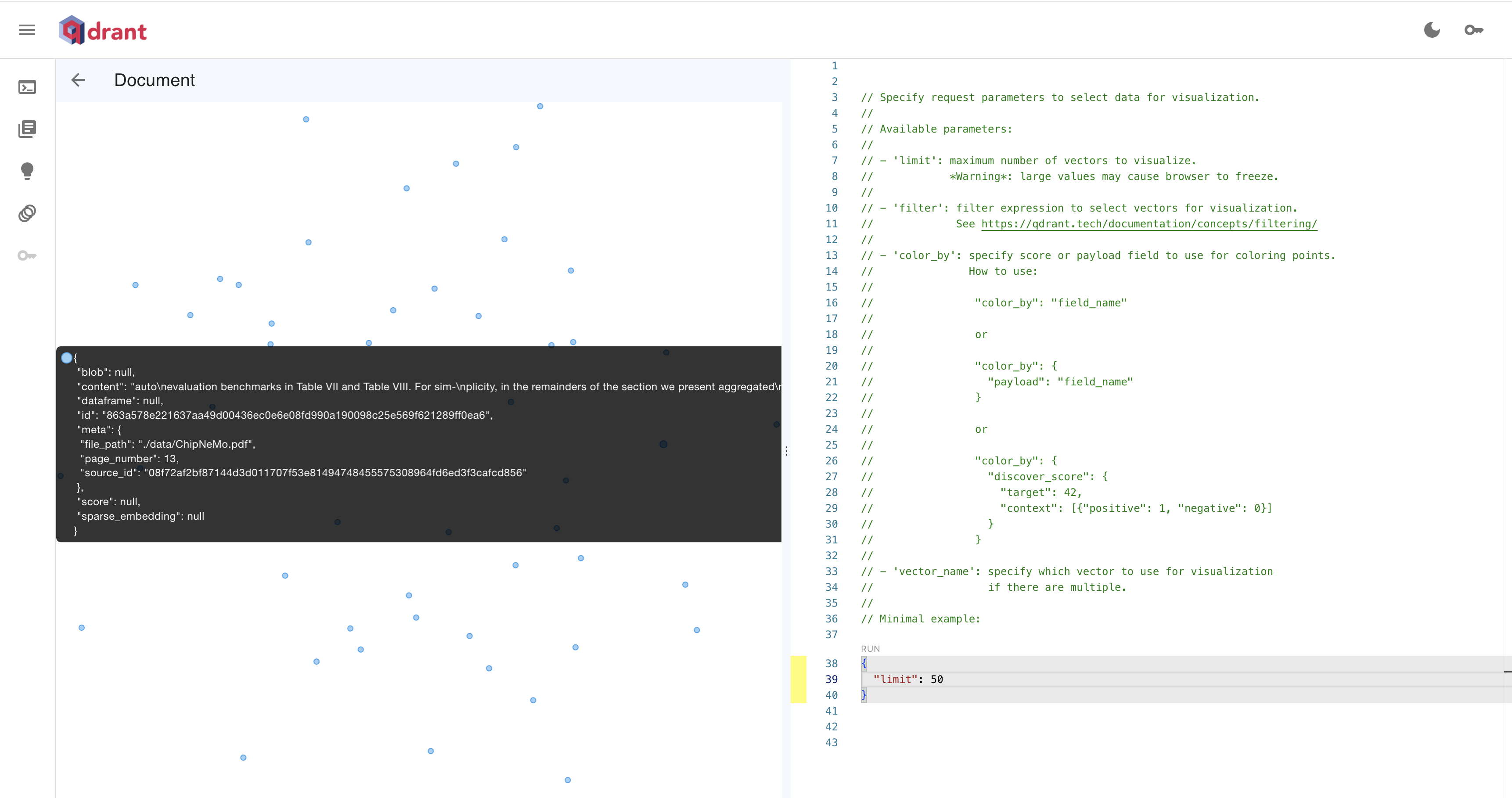

, 'writer': {'documents_written': 108}} It is possible to check the Qdrant database deployments through the Web UI. We can check the embeddings stored on the dashboard available qdrant_endpoint/dashboard

3. Create the RAG Pipeline

Let's now create the Haystack RAG pipeline. We will initialize the LLM generator with the LLM self-deployed with NIM.
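A possible way to assemble this pipeline is sketched here. The component names and connections follow the pipeline summary printed in this section; everything else is an assumption made for illustration: the prompt template wording, the template variable `query`, the generation arguments, the embedding dimension, and the function name `build_rag_pipeline`. The same package requirements as for indexing apply (`haystack-ai`, `nvidia-haystack`, `qdrant-haystack`).

```python
# Illustrative prompt template; the wording and the `query` variable are assumptions.
RAG_PROMPT_TEMPLATE = """Answer the question using only the context below.
Context:
{% for document in documents %}
{{ document.content }}
{% endfor %}
Question: {{ query }}
Answer:"""

# Component wiring, taken from the pipeline summary printed in this notebook.
RAG_CONNECTIONS = [
    ("embedder.embedding", "retriever.query_embedding"),
    ("retriever.documents", "prompt.documents"),
    ("prompt.prompt", "generator.prompt"),
]


def build_rag_pipeline(llm_model, llm_api_url, embedder_model, embedder_api_url, qdrant_url):
    """Assemble the RAG pipeline (sketch; requires Haystack at call time)."""
    # Imported lazily so this module still loads where Haystack is not installed.
    from haystack import Pipeline
    from haystack.components.builders import PromptBuilder
    from haystack_integrations.components.embedders.nvidia import NvidiaTextEmbedder
    from haystack_integrations.components.generators.nvidia import NvidiaGenerator
    from haystack_integrations.components.retrievers.qdrant import QdrantEmbeddingRetriever
    from haystack_integrations.document_stores.qdrant import QdrantDocumentStore

    # embedding_dim=1024 is an assumption; it must match the indexed embeddings.
    document_store = QdrantDocumentStore(url=qdrant_url, embedding_dim=1024)

    pipeline = Pipeline()
    pipeline.add_component(
        "embedder", NvidiaTextEmbedder(model=embedder_model, api_url=embedder_api_url)
    )
    pipeline.add_component("retriever", QdrantEmbeddingRetriever(document_store=document_store))
    pipeline.add_component("prompt", PromptBuilder(template=RAG_PROMPT_TEMPLATE))
    pipeline.add_component(
        "generator",
        NvidiaGenerator(
            model=llm_model,
            api_url=llm_api_url,
            # Generation settings are illustrative values, not tuned recommendations.
            model_arguments={"temperature": 0.2, "max_tokens": 512},
        ),
    )

    for sender, receiver in RAG_CONNECTIONS:
        pipeline.connect(sender, receiver)
    return pipeline
```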

<haystack.core.pipeline.pipeline.Pipeline object at 0x7f267956e130>
🚅 Components
  - embedder: NvidiaTextEmbedder
  - retriever: QdrantEmbeddingRetriever
  - prompt: PromptBuilder
  - generator: NvidiaGenerator
🛤️ Connections
  - embedder.embedding -> retriever.query_embedding (List[float])
  - retriever.documents -> prompt.documents (List[Document])
  - prompt.prompt -> generator.prompt (str)

Let's now query the RAG pipeline with a question about the ChipNeMo model.
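The question has to reach two components: the text embedder, which turns it into a query vector, and the prompt builder, which inserts it into the prompt. A small sketch of the run inputs is shown here; it assumes the prompt template exposes a variable named `query`, and both the helper name `build_rag_inputs` and the question wording are hypothetical.

```python
def build_rag_inputs(question: str) -> dict:
    """Route one question to both the query embedder and the prompt builder."""
    return {
        "embedder": {"text": question},  # input of the NvidiaTextEmbedder component
        "prompt": {"query": question},  # assumes a template variable named `query`
    }


question = "Describe the ChipNeMo model."  # hypothetical question wording
inputs = build_rag_inputs(question)
print(inputs)
```

With a pipeline object named `rag` (an assumed name), the answer text would then be read from `rag.run(inputs)["generator"]["replies"][0]`.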

ChipNeMo is a domain-adapted large language model (LLM) designed for chip design, which aims to explore the applications of LLMs for industrial chip design. It is developed by reusing public training data from other language models, with the intention of preserving general knowledge and natural language capabilities during domain adaptation. The model is trained using a combination of natural language and code datasets, including Wikipedia data and GitHub data, and is evaluated on various benchmarks, including multiple-choice questions, code generation, and human evaluation. ChipNeMo implements multiple domain adaptation techniques, including pre-training, domain adaptation, and fine-tuning, to adapt the LLM to the chip design domain. The model is capable of understanding internal HW designs and explaining complex design topics, generating EDA scripts, and summarizing and analyzing bugs.

This notebook shows how to build a Haystack RAG pipeline using self-deployed generative AI models with NVIDIA Inference Microservices (NIMs).

Please check the documentation on how to deploy NVIDIA NIMs in your own environment.

For experimentation purposes, you can also substitute the self-deployed models with the same models hosted by NVIDIA at ai.nvidia.com.