
Observability for Haystack

This cookbook demonstrates how to use Langfuse to gain real-time observability for your Haystack application.

What is Haystack? Haystack is an open-source Python framework developed by deepset. Its modular design lets users build custom pipelines for production-ready LLM applications, such as retrieval-augmented generation (RAG) pipelines and search systems. It integrates with Hugging Face Transformers, Elasticsearch, OpenSearch, OpenAI, Cohere, Anthropic, and others, which makes it a popular choice for teams of all sizes.

What is Langfuse? Langfuse is an open-source LLM engineering platform. It helps teams collaboratively manage prompts, trace applications, debug problems, and evaluate their LLM systems in production.

Get Started

We'll walk through a simple example of using Haystack and integrating it with Langfuse.

Step 1: Install Dependencies
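This step does not list the packages. A minimal sketch, assuming the walkthrough needs the PyPI distributions haystack-ai, langfuse, and openinference-instrumentation-haystack (typically installed with pip install haystack-ai langfuse openinference-instrumentation-haystack). The helper missing_packages is hypothetical; it only reports which of these are absent from the current environment, using the standard library's importlib.metadata.

```python
from importlib.metadata import PackageNotFoundError, version

# Assumed PyPI distribution names used by the rest of this walkthrough.
REQUIRED_PACKAGES = ["haystack-ai", "langfuse", "openinference-instrumentation-haystack"]


def missing_packages(packages):
    """Return the names in `packages` that are not installed in this environment."""
    missing = []
    for name in packages:
        try:
            version(name)  # raises PackageNotFoundError if the distribution is absent
        except PackageNotFoundError:
            missing.append(name)
    return missing


if __name__ == "__main__":
    to_install = missing_packages(REQUIRED_PACKAGES)
    if to_install:
        print("Run: pip install " + " ".join(to_install))
    else:
        print("All dependencies are installed.")
```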

Step 2: Set Up Environment Variables

Configure your Langfuse API keys. You can get them by signing up for Langfuse Cloud or self-hosting Langfuse.
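A minimal sketch of this configuration in Python, assuming the variable names the Langfuse Python SDK (LANGFUSE_PUBLIC_KEY, LANGFUSE_SECRET_KEY, LANGFUSE_HOST) and Haystack's OpenAI and SerperDev components (OPENAI_API_KEY, SERPERDEV_API_KEY) read by default. All values shown are placeholders, not real keys.

```python
import os

# Placeholder values: replace them with your own keys, or export the variables
# in your shell before starting Python. setdefault keeps any value already set.
os.environ.setdefault("LANGFUSE_PUBLIC_KEY", "pk-lf-...")
os.environ.setdefault("LANGFUSE_SECRET_KEY", "sk-lf-...")
# EU region shown; use https://us.cloud.langfuse.com for the US region,
# or your own URL when self-hosting.
os.environ.setdefault("LANGFUSE_HOST", "https://cloud.langfuse.com")

# Keys for the external services the example pipeline calls.
os.environ.setdefault("OPENAI_API_KEY", "sk-proj-...")
os.environ.setdefault("SERPERDEV_API_KEY", "...")
```

Using setdefault rather than plain assignment means credentials already exported in the shell are not overwritten by the placeholders.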

With the environment variables set, we can now initialize the Langfuse client. get_client() creates the client from the credentials stored in those environment variables.
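A minimal sketch of the initialization, assuming the Langfuse Python SDK's get_client() and its auth_check() method (which contacts the server and returns whether the keys and host are valid). The helpers missing_env_vars and init_langfuse are hypothetical; the SDK is imported inside the function so the environment check works even where Langfuse is not installed.

```python
import os

REQUIRED_ENV_VARS = ("LANGFUSE_PUBLIC_KEY", "LANGFUSE_SECRET_KEY")


def missing_env_vars(names=REQUIRED_ENV_VARS):
    """Return the required variables that are unset or empty."""
    return [name for name in names if not os.environ.get(name)]


def init_langfuse():
    """Create the Langfuse client from environment variables and verify the credentials."""
    missing = missing_env_vars()
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))

    from langfuse import get_client  # lazy import: only needed when actually connecting

    langfuse = get_client()
    # auth_check() makes a network call, so avoid it on hot paths in production.
    if not langfuse.auth_check():
        raise RuntimeError("Langfuse rejected the credentials; check the keys and LANGFUSE_HOST.")
    return langfuse
```

Failing fast on missing variables gives a clearer error than an authentication failure reported later by the exporter.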

Step 3: Initialize Haystack Instrumentation

Now we initialize the OpenInference Haystack instrumentation. This third-party instrumentation automatically captures Haystack operations as OpenTelemetry (OTel) spans, which the Langfuse client then exports to Langfuse.
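A minimal sketch, assuming the openinference-instrumentation-haystack package exposes HaystackInstrumentor from openinference.instrumentation.haystack, as OpenInference's instrumentors follow the OpenTelemetry BaseInstrumentor interface. The wrapper instrument_haystack is hypothetical; calling it after get_client() mirrors the order of the steps in this guide.

```python
def instrument_haystack():
    """Enable OpenInference tracing for Haystack pipelines and components.

    Returns the instrumentor so callers can later undo it with uninstrument().
    """
    # Imported lazily so this module can be loaded without the package installed.
    from openinference.instrumentation.haystack import HaystackInstrumentor

    instrumentor = HaystackInstrumentor()
    # With no tracer_provider argument, spans go to the global OpenTelemetry
    # tracer provider, which is where the Langfuse client listens.
    instrumentor.instrument()
    return instrumentor
```

Keeping a handle to the instrumentor is mainly useful in tests, where you may want to call uninstrument() so that later tests run without tracing.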

Step 4: Create a Simple Haystack Application

Now we create a simple Haystack application using an OpenAI model and the SerperDev search API.
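A minimal sketch of such an application, assuming Haystack 2.x components (SerperDevWebSearch, PromptBuilder, OpenAIGenerator) that read SERPERDEV_API_KEY and OPENAI_API_KEY from the environment. The model name gpt-4o-mini, the prompt template, and the helpers build_pipeline and ask are illustrative choices, not the original example. Haystack imports happen inside build_pipeline because constructing the components resolves the API keys.

```python
PROMPT_TEMPLATE = """Answer the question using only the search results below.
{% for doc in documents %}
- {{ doc.content }}
{% endfor %}
Question: {{ query }}
Answer:"""


def build_pipeline(model="gpt-4o-mini", top_k=3):
    """Build a web search -> prompt -> LLM pipeline (needs SERPERDEV_API_KEY and OPENAI_API_KEY)."""
    from haystack import Pipeline
    from haystack.components.builders import PromptBuilder
    from haystack.components.generators import OpenAIGenerator
    from haystack.components.websearch import SerperDevWebSearch

    pipe = Pipeline()
    pipe.add_component("search", SerperDevWebSearch(top_k=top_k))
    pipe.add_component("prompt_builder", PromptBuilder(template=PROMPT_TEMPLATE))
    pipe.add_component("llm", OpenAIGenerator(model=model))
    # Search results fill the template's `documents`; the rendered prompt goes to the LLM.
    pipe.connect("search.documents", "prompt_builder.documents")
    pipe.connect("prompt_builder.prompt", "llm.prompt")
    return pipe


def ask(pipe, question):
    """Send the question to both the search component and the prompt template."""
    result = pipe.run({"search": {"query": question}, "prompt_builder": {"query": question}})
    return result["llm"]["replies"][0]
```

For example, print(ask(build_pipeline(), "What is Haystack?")) runs one query; with the instrumentation from Step 3 active, each run should appear in Langfuse as a trace with a span per component.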

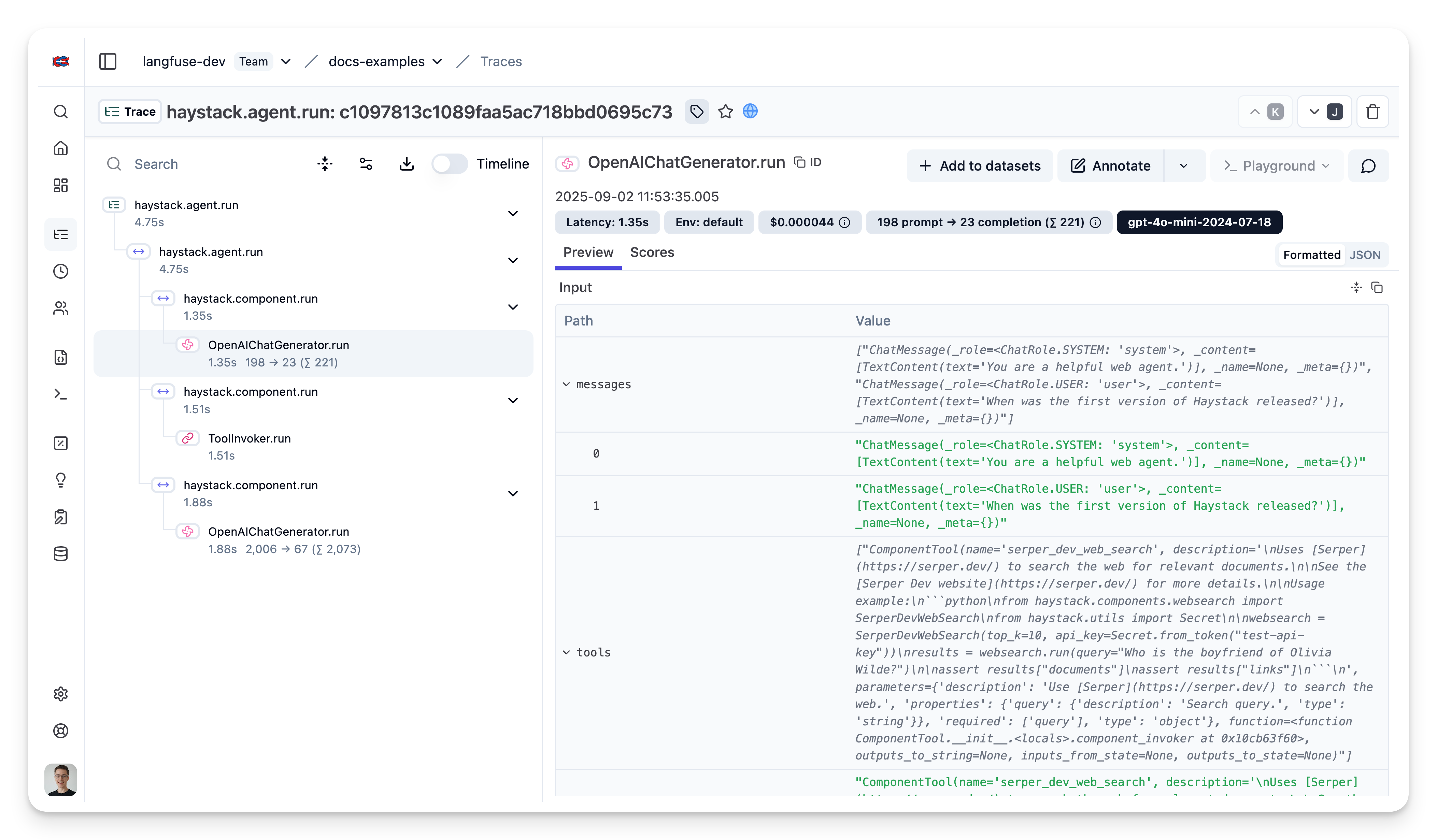

Step 5: View Traces in Langfuse

After running your pipeline, log in to Langfuse to explore the generated traces. Each trace shows the individual pipeline steps along with metrics such as token counts, latencies, and the execution path.
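In short-lived scripts, the Langfuse client sends spans in background batches, so a trace may be missing if the process exits first. A minimal sketch, assuming the Langfuse client's flush() method (which blocks until queued data has been sent); traced_run is a hypothetical helper, not part of Langfuse or Haystack.

```python
def traced_run(fn, *args, flush=None, **kwargs):
    """Call fn(*args, **kwargs), then flush buffered spans to Langfuse, even if fn raises."""
    if flush is None:
        # Lazy import so the helper can be tested with a stand-in flush function.
        from langfuse import get_client

        flush = get_client().flush
    try:
        return fn(*args, **kwargs)
    finally:
        flush()  # runs on success and on error, so failed runs are traced too
```

For a Haystack pipeline object pipe, traced_run(pipe.run, {"search": {"query": q}, "prompt_builder": {"query": q}}) would run the pipeline and wait for its spans to be exported before returning. Long-running services usually do not need this, since the background exporter flushes periodically.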