Moderation Classifier

Moderation: Train your own moderation service

In this cookbook, we will explore classification for moderation using our Classifier Factory to build classifiers tailored to your specific needs and use cases.

To keep things straightforward, we will concentrate on a particular example that involves multilabel classification for content moderation.

Dataset

We will use a subset of the google/civil_comments dataset. This subset includes several labels that we will for multi-label classification, allowing us to obtain scores for each type of moderation.

Subset

Lets download and prepare the subset, we will install datasets and load it.

Train set length: 20607 Validation set length: 1010 Test set length: 1013

This will be the subset we will be training with. In an optimal scenario, you may need to do further curation and data preparation to balance the dataset and achieve better results. In this scenario, we have a fairly balanced dataset for toxicity and insult, but it is much less balanced for the rest of the labels.

Format Data

Now that we have loaded our dataset, we will convert it to the proper desired format to upload for training.

The data will be converted to a JSONL format as follows:

{"text": "I believe the Trump administration made a big mistake by its strict definition of family members allowed to visit the U.S. from the six Muslim-majority countries. It knew or should have known opponents would pounce on a narrow definition and, therefore, should have expanded it a bit to deflect challenges and have a better chance of overcoming inevitable appeals in court. Moreover, the relatives to be allowed in were not coming as permanent residents; they were coming as visitors for no longer than 6 months with a B1/B2 tourist visas. Why fall on your sword over that? Those coming to visit still had to be vetted by consular officials issuing tourist visas. It would have been easy for the State Department to quietly tighten or enhance the vetting process in the six countries to make it a bit more difficult for people to obtain visas, thus minimizing the number of people coming and make it a non-issue.", "labels": {"moderation": ["safe"]}}

{"text": "Great comment Jake..", "labels": {"moderation": ["safe"]}}

{"text": "Uh huh. Then why don't you behave that way in the ADN comments section? \nYour alleged \"c'est la vie\" attitude is nowhere to be found on this website. Where's your vaunted \"personal integrity\" here? You sound like Trump at his ridiculous and incredibly embarrassing - for him and his cabinet - \"Tell Me How Much You Worship Me\" fake cabinet meeting.", "labels": {"moderation": ["toxicity", "insult"]}}

{"text": "And given at least a two year probation, complete with random UAs and mandated counseling for liars.", "labels": {"moderation": ["toxicity", "insult"]}}

{"text": "You did make an accusation else there wouldn't be an investigation.", "labels": {"moderation": ["safe"]}}

...

With an example of a label being:

"labels": {

"moderation": [

"toxicity",

"insult"

]

}

For multi-label classification, we arbitrarily defined a new label "safe" to represent samples that were not flagged.

100%|██████████| 20607/20607 [00:00<00:00, 57140.72it/s] 100%|██████████| 1010/1010 [00:00<00:00, 54499.51it/s] 100%|██████████| 1013/1013 [00:00<00:00, 51337.93it/s]

The data was converted and saved properly. We can now train our model.

Training

There are two methods to train the model: either upload and train via la platforme or via the API.

First, we need to install mistralai.

And setup our client, you can create an API key here.

We will upload 2 files, the training set and the validation set ( optional ) that will be used for validation loss.

With the data uploaded, we can create a job.

{

"id": "cbe076ac-40a6-4fa4-9162-281531405d20",

"auto_start": false,

"model": "ministral-3b-latest",

"status": "QUEUED",

"created_at": 1744806685,

"modified_at": 1744806685,

"training_files": [

"d0a8d6ac-2d9c-4d43-af04-e40b244fbdb3"

],

"hyperparameters": {

"training_steps": 200,

"learning_rate": 5e-05,

"weight_decay": 0.1,

"warmup_fraction": 0.05,

"epochs": null,

"seq_len": 16384

},

"validation_files": [

"ea6c9ca7-470b-4953-92ab-4f216a967852"

],

"fine_tuned_model": null,

"suffix": null,

"integrations": [

{

"project": "moderation-classifier",

"name": null,

"run_name": null,

"url": null

}

],

"trained_tokens": null,

"metadata": {

"expected_duration_seconds": null,

"cost": 0.0,

"cost_currency": null,

"train_tokens_per_step": null,

"train_tokens": null,

"data_tokens": null,

"estimated_start_time": null

}

}

Once the job is created, we can review details such as the number of epochs and other relevant information. This allows us to make informed decisions before initiating the job.

We'll retrieve the job and wait for it to complete the validation process before starting. This validation step ensures the job is ready to begin.

{

"id": "cbe076ac-40a6-4fa4-9162-281531405d20",

"auto_start": false,

"model": "ministral-3b-latest",

"status": "VALIDATED",

"created_at": 1744806685,

"modified_at": 1744806687,

"training_files": [

"d0a8d6ac-2d9c-4d43-af04-e40b244fbdb3"

],

"hyperparameters": {

"training_steps": 200,

"learning_rate": 5e-05,

"weight_decay": 0.1,

"warmup_fraction": 0.05,

"epochs": 7.29886929671425,

"seq_len": 16384

},

"classifier_targets": [

{

"name": "moderation",

"labels": [

"toxicity",

"safe",

"identity_attack",

"sexual_explicit",

"obscene",

"insult",

"threat"

]

}

],

"validation_files": [

"ea6c9ca7-470b-4953-92ab-4f216a967852"

],

"fine_tuned_model": null,

"suffix": null,

"integrations": [

{

"project": "moderation-classifier",

"name": null,

"run_name": null,

"url": null

}

],

"trained_tokens": null,

"metadata": {

"expected_duration_seconds": 3400,

"cost": 6.56,

"cost_currency": "EUR",

"train_tokens_per_step": 65536,

"train_tokens": 13107200,

"data_tokens": 1795785,

"estimated_start_time": null

},

"events": [

{

"name": "status-updated",

"created_at": 1744806685,

"data": {

"status": "QUEUED"

}

},

{

"name": "status-updated",

"created_at": 1744806685,

"data": {

"status": "VALIDATING"

}

},

{

"name": "status-updated",

"created_at": 1744806687,

"data": {

"status": "VALIDATED"

}

}

],

"checkpoints": []

}

We can now run the job.

{

"id": "cbe076ac-40a6-4fa4-9162-281531405d20",

"auto_start": false,

"model": "ministral-3b-latest",

"status": "QUEUED",

"created_at": 1744806685,

"modified_at": 1744806690,

"training_files": [

"d0a8d6ac-2d9c-4d43-af04-e40b244fbdb3"

],

"hyperparameters": {

"training_steps": 200,

"learning_rate": 5e-05,

"weight_decay": 0.1,

"warmup_fraction": 0.05,

"epochs": 7.29886929671425,

"seq_len": 16384

},

"classifier_targets": [

{

"name": "moderation",

"labels": [

"toxicity",

"safe",

"identity_attack",

"sexual_explicit",

"obscene",

"insult",

"threat"

]

}

],

"validation_files": [

"ea6c9ca7-470b-4953-92ab-4f216a967852"

],

"fine_tuned_model": null,

"suffix": null,

"integrations": [

{

"project": "moderation-classifier",

"name": null,

"run_name": null,

"url": null

}

],

"trained_tokens": null,

"metadata": {

"expected_duration_seconds": 3400,

"cost": 6.56,

"cost_currency": "EUR",

"train_tokens_per_step": 65536,

"train_tokens": 13107200,

"data_tokens": 1795785,

"estimated_start_time": 1744806861

},

"events": [

{

"name": "status-updated",

"created_at": 1744806685,

"data": {

"status": "QUEUED"

}

},

{

"name": "status-updated",

"created_at": 1744806685,

"data": {

"status": "VALIDATING"

}

},

{

"name": "status-updated",

"created_at": 1744806687,

"data": {

"status": "VALIDATED"

}

}

],

"checkpoints": []

}

The job is now starting. Let's keep track of the status and plot the loss.

For that, we highly recommend making use of our Weights and Biases integration, but we will also keep track of it directly in this notebook.

WANDB

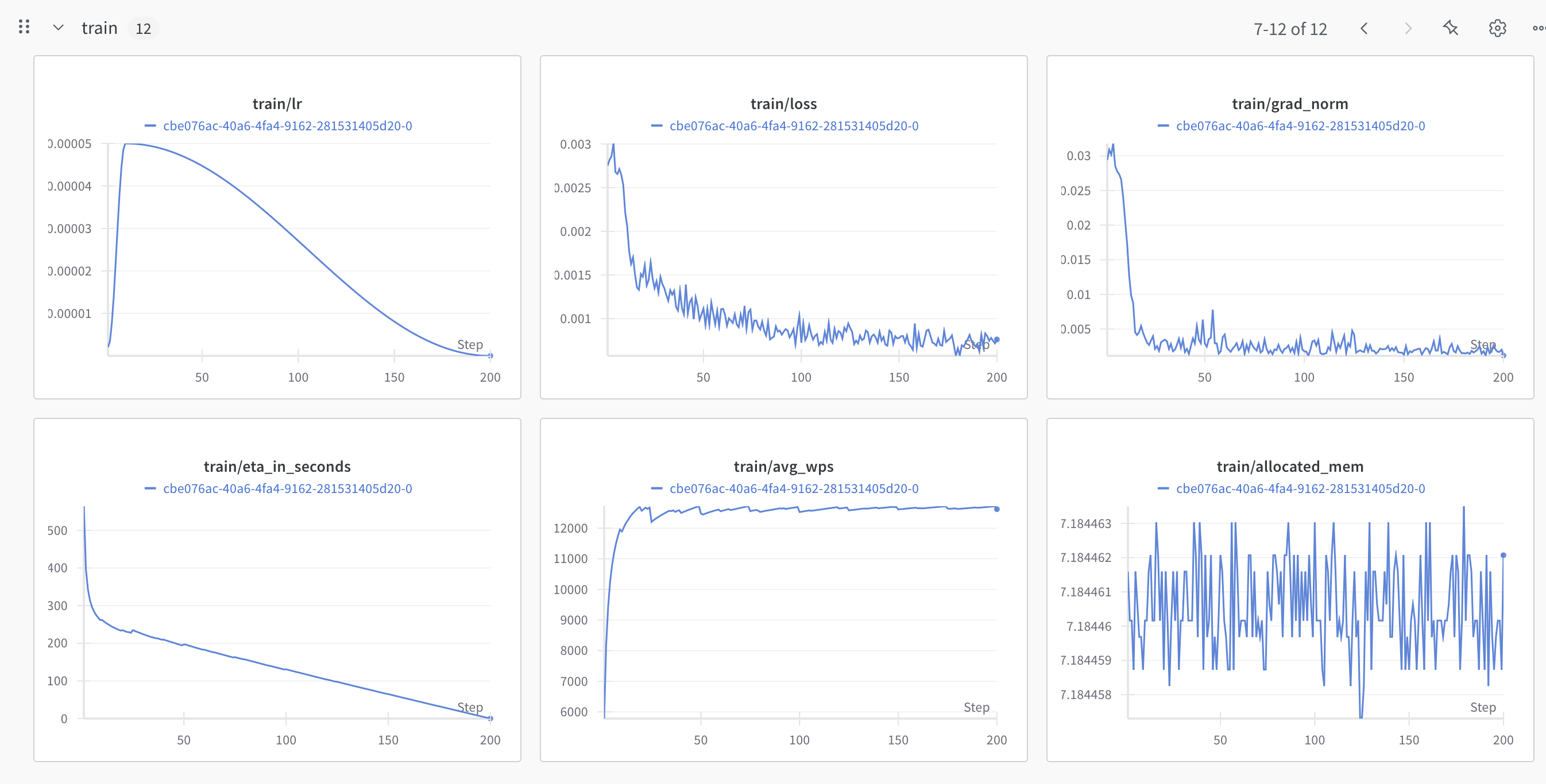

Training:

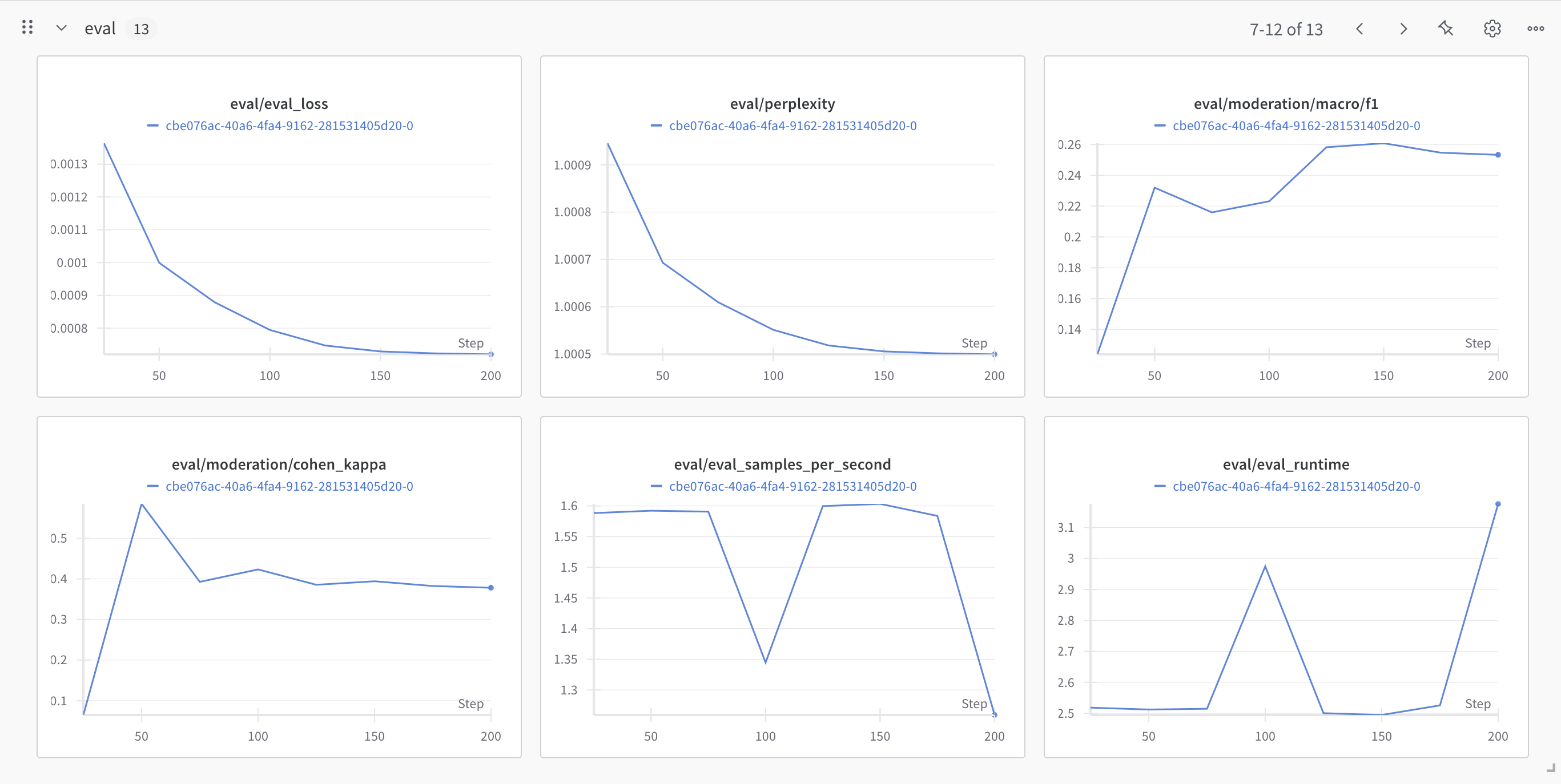

Eval/Validation:

SUCCESS

Inference

Our model is trained and ready for use! Let's test it on a sample from our test set!

Text: Flim flam artist Paul Ryan doing his stuff....

Classifier Response: {

"id": "9c3a76bc84204dcdadaf11e291365a3b",

"model": "ft:classifier:ministral-3b-latest:8e2706f0:20250416:cbe076ac",

"results": [

{

"moderation": {

"scores": {

"toxicity": 0.11157931387424469,

"safe": 0.7990996837615967,

"identity_attack": 0.0010769261280074716,

"sexual_explicit": 0.0030203138012439013,

"obscene": 0.008739566430449486,

"insult": 0.07260604947805405,

"threat": 0.0038781599141657352

}

}

}

]

}

For a more in-depth guide on multi-target, with an evaluation comparison between LLMs and our classifier API, visit this cookbook.