Llama3 (8B) Ollama

To run this, press "Runtime" and press "Run all" on a free Tesla T4 Google Colab instance!

To install Unsloth on your local device, follow our guide. This notebook is licensed LGPL-3.0.

You will learn how to do data prep, how to train, how to run the model, and how to save it.

News

Train MoEs - DeepSeek, GLM, Qwen and gpt-oss 12x faster with 35% less VRAM. Blog

You can now train embedding models 1.8-3.3x faster with 20% less VRAM. Blog

Ultra Long-Context Reinforcement Learning is here with 7x more context windows! Blog

3x faster LLM training with 30% less VRAM and 500K context. 3x faster • 500K Context

New in Reinforcement Learning: FP8 RL • Vision RL • Standby • gpt-oss RL

Visit our docs for all our model uploads and notebooks.

Installation

Unsloth

🦥 Unsloth: Will patch your computer to enable 2x faster free finetuning.

==((====))== Unsloth 2024.9.post4: Fast Llama patching. Transformers = 4.44.2.

\\ /| GPU: Tesla T4. Max memory: 14.748 GB. Platform = Linux.

O^O/ \_/ \ Pytorch: 2.4.1+cu121. CUDA = 7.5. CUDA Toolkit = 12.1.

\ / Bfloat16 = FALSE. FA [Xformers = 0.0.28.post1. FA2 = False]

"-____-" Free Apache license: http://github.com/unslothai/unsloth

Unsloth: Fast downloading is enabled - ignore downloading bars which are red colored!


We now add LoRA adapters so we only need to update 1 to 10% of all parameters!

Unsloth 2024.9.post4 patched 32 layers with 32 QKV layers, 32 O layers and 32 MLP layers.
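As a rough check on how small the trainable part is, the sketch below counts the parameters LoRA adds to the seven projection matrices in each of the 32 patched layers. The matrix shapes are taken from the hf-to-gguf log later in this notebook. The rank of 16 is an assumption, since the configuration cell is not shown here; it was chosen because it reproduces the 41,943,040 trainable parameters reported by the trainer.

```python
# Per-layer weight shapes (in_features, out_features), read from the
# hf-to-gguf conversion log in this notebook.
LAYER_SHAPES = {
    "attn_q": (4096, 4096),
    "attn_k": (4096, 1024),
    "attn_v": (4096, 1024),
    "attn_output": (4096, 4096),
    "ffn_gate": (4096, 14336),
    "ffn_up": (4096, 14336),
    "ffn_down": (14336, 4096),
}
NUM_LAYERS = 32
RANK = 16  # assumption: the LoRA rank is not shown in this text


def lora_params(in_features, out_features, rank):
    """LoRA adds two small matrices: A (rank x in) and B (out x rank)."""
    return rank * (in_features + out_features)


def total_lora_params(shapes=LAYER_SHAPES, num_layers=NUM_LAYERS, rank=RANK):
    """Sum the adapter parameters over every adapted matrix in every layer."""
    per_layer = sum(lora_params(i, o, rank) for i, o in shapes.values())
    return per_layer * num_layers


print(f"{total_lora_params():,}")  # 41,943,040
```

Doubling the rank doubles this count, so the rank is the main knob for trading adapter capacity against memory.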

Data Prep

We now use the Alpaca dataset from vicgalle, a 52K-example version of the original Alpaca dataset with responses generated by GPT-4. You can replace this code section with your own data prep.


['instruction', 'input', 'output', 'text']

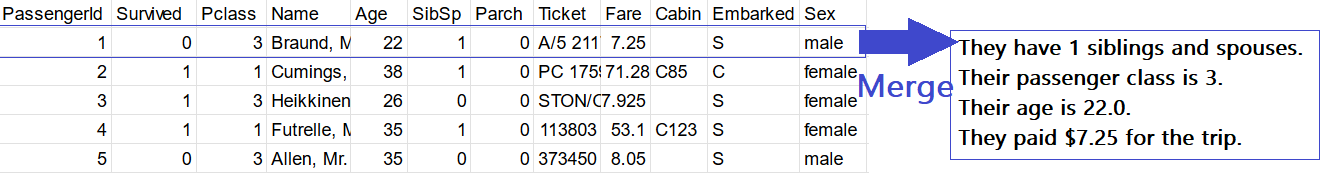

One issue is this dataset has multiple columns. For Ollama and llama.cpp to function like a custom ChatGPT Chatbot, we must only have 2 columns - an instruction and an output column.

['instruction', 'input', 'output', 'text']

To solve this, we shall do the following:

- Merge all columns into 1 instruction prompt.

- Remember LLMs are text predictors, so we can customize the instruction to anything we like!

- Use the to_sharegpt function to do this column merging process!

For example, in our Titanic CSV finetuning notebook, we merged multiple columns into 1 prompt:

To merge multiple columns into 1, use merged_prompt.

- Enclose all columns in curly braces {}.

- Optional text must be enclosed in [[]]. For example, if the column "Pclass" is empty, the merging function will not show the text and will skip it. This is useful for datasets with missing values.

- You can select every column, or a few!

- Select the output or target / prediction column in output_column_name. For the Alpaca dataset, this will be output.
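The merging rules above can be sketched in plain Python. This is only an illustration of the {} and [[]] conventions, not Unsloth's implementation: the helper name merge_columns, the example rows, and the prompt text are all hypothetical.

```python
import re

# Matches either an optional [[...]] block or a plain {column} placeholder.
PATTERN = re.compile(r"\[\[(?P<optional>.*?)\]\]|\{(?P<column>\w+)\}", re.DOTALL)
PLACEHOLDER = re.compile(r"\{(\w+)\}")


def merge_columns(merged_prompt, row):
    """Fill {column} placeholders; drop [[...]] blocks whose columns are empty."""

    def fill(text):
        return PLACEHOLDER.sub(lambda m: str(row[m.group(1)]), text)

    def replace(match):
        block = match.group("optional")
        if block is None:  # a plain {column} placeholder outside any [[...]]
            return str(row[match.group("column")])
        columns = PLACEHOLDER.findall(block)
        # Skip the whole optional block if any column it uses is missing or empty.
        if any(row.get(c) in (None, "") for c in columns):
            return ""
        return fill(block)

    return PATTERN.sub(replace, merged_prompt)


merged_prompt = "{instruction}[[\nYour input is:\n{input}]]"
with_input = {"instruction": "Add the numbers.", "input": "2 and 3", "output": "5"}
without_input = {"instruction": "Name a colour.", "input": "", "output": "Blue"}

print(merge_columns(merged_prompt, with_input))
print(merge_columns(merged_prompt, without_input))
```

The first row keeps the "Your input is:" block, while the second row, whose input column is empty, produces only the instruction.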

To make the finetune handle multiple turns (like in ChatGPT), we have to create a "fake" dataset with multiple turns - we use conversation_extension to randomly select some conversations from the dataset, and pack them together into 1 conversation.
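One way to picture this packing step is the sketch below. It is a simplified stand-in and not Unsloth's code: the function name extend_conversations, the seed argument, and the ShareGPT-style "from" / "value" keys are assumptions made for illustration.

```python
import random


def extend_conversations(pairs, conversation_extension, seed=0):
    """Turn single-turn (instruction, output) pairs into multi-turn conversations.

    Each conversation starts with one original pair and is padded with
    randomly chosen pairs until it holds `conversation_extension` exchanges.
    """
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    conversations = []
    for pair in pairs:
        extras = [rng.choice(pairs) for _ in range(conversation_extension - 1)]
        turns = []
        for instruction, output in [pair, *extras]:
            turns.append({"from": "human", "value": instruction})
            turns.append({"from": "gpt", "value": output})
        conversations.append(turns)
    return conversations


pairs = [("What is 2 + 2?", "4"), ("Name a colour.", "Blue"), ("Say hi.", "Hi!")]
for conversation in extend_conversations(pairs, conversation_extension=3):
    print(len(conversation), "turns, starting with:", conversation[0]["value"])
```

The packed turns are unrelated to each other, which is why the text calls this a "fake" multi-turn dataset: it teaches the turn structure rather than real follow-up behaviour.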


Finally use standardize_sharegpt to fix up the dataset!


Customizable Chat Templates

You also need to specify a chat template. Previously, you could use the Alpaca format as shown below.

Now, you have to use {INPUT} for the instruction and {OUTPUT} for the response.

We also allow you to use an optional {SYSTEM} field. This is useful for Ollama when you want to use a custom system prompt (also like in ChatGPT).

You can also omit the {SYSTEM} field and put plain text in its place.

chat_template = """{SYSTEM}

USER: {INPUT}

ASSISTANT: {OUTPUT}"""

Use the template below if you want the Llama-3 prompt format. You must use the instruct model, not the base model, with this template!

chat_template = """<|begin_of_text|><|start_header_id|>system<|end_header_id|>

{SYSTEM}<|eot_id|><|start_header_id|>user<|end_header_id|>

{INPUT}<|eot_id|><|start_header_id|>assistant<|end_header_id|>

{OUTPUT}<|eot_id|>"""

For the ChatML format:

chat_template = """<|im_start|>system

{SYSTEM}<|im_end|>

<|im_start|>user

{INPUT}<|im_end|>

<|im_start|>assistant

{OUTPUT}<|im_end|>"""

The issue is the Alpaca format has 3 fields, whilst OpenAI style chatbots must only use 2 fields (instruction and response). That's why we used the to_sharegpt function to merge these columns into 1.
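To see how the {SYSTEM}, {INPUT} and {OUTPUT} fields are filled in, here is a minimal sketch that renders one exchange with the simple USER / ASSISTANT template shown earlier. The helper render_turn is hypothetical and only substitutes text; the library's own template handling does more, such as adding the EOS token.

```python
import re

chat_template = """{SYSTEM}
USER: {INPUT}
ASSISTANT: {OUTPUT}"""


def render_turn(template, user_input, assistant_output, system=""):
    """Substitute the three template fields in a single pass."""
    fields = {"SYSTEM": system, "INPUT": user_input, "OUTPUT": assistant_output}
    return re.sub(
        r"\{(SYSTEM|INPUT|OUTPUT)\}",
        lambda match: fields[match.group(1)],
        template,
    )


print(render_turn(chat_template, "Hi", "Hello!", system="You are helpful."))
```

Because only the instruction and the response are substituted per turn, any extra dataset columns have to be merged into the instruction first.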

Unsloth: We automatically added an EOS token to stop endless generations.


max_steps is given, it will override any value given in num_train_epochs

GPU = Tesla T4. Max memory = 14.748 GB. 5.613 GB of memory reserved.

==((====))== Unsloth - 2x faster free finetuning | Num GPUs = 1

\\ /| Num examples = 52,002 | Num Epochs = 1

O^O/ \_/ \ Batch size per device = 2 | Gradient Accumulation steps = 4

\ / Total batch size = 8 | Total steps = 60

"-____-" Number of trainable parameters = 41,943,040

910.2379 seconds used for training.

15.17 minutes used for training.

Peak reserved memory = 8.361 GB.

Peak reserved memory for training = 2.748 GB.

Peak reserved memory % of max memory = 56.692 %.

Peak reserved memory for training % of max memory = 18.633 %.
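The figures in the training log can be reproduced with a few lines of arithmetic. The sketch below uses only numbers printed above; the helper names are made up for illustration.

```python
def effective_batch_size(per_device, grad_accum_steps, num_gpus=1):
    """Examples consumed per optimizer step."""
    return per_device * grad_accum_steps * num_gpus


def percent(part, whole):
    return round(part / whole * 100, 3)


batch = effective_batch_size(per_device=2, grad_accum_steps=4)  # 8
examples_seen = batch * 60            # 60 steps -> 480 conversations
minutes = round(910.2379 / 60, 2)     # 15.17

max_memory_gb = 14.748
peak_reserved_gb = 8.361
reserved_before_training_gb = 5.613
training_gb = round(peak_reserved_gb - reserved_before_training_gb, 3)  # 2.748

print(batch, examples_seen, minutes)
print(percent(peak_reserved_gb, max_memory_gb))  # 56.692
print(percent(training_gb, max_memory_gb))       # 18.633
```

With max_steps at 60, the run sees 480 of the 52,002 examples, so it is a short demonstration run rather than a full epoch.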

The attention mask is not set and cannot be inferred from input because pad token is same as eos token. As a consequence, you may observe unexpected behavior. Please pass your input's `attention_mask` to obtain reliable results.

The next number in the Fibonacci sequence is 13.<|end_of_text|>

Since we created an actual chatbot, you can also do longer conversations by manually adding alternating conversations between the user and assistant!
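A longer conversation is just a list of alternating turns that ends with the user's newest question. The sketch below assumes the common role / content message layout; the prompts are illustrative, and is_alternating is a hypothetical helper for catching ordering mistakes before generation.

```python
def is_alternating(messages):
    """True if roles go user, assistant, user, ... and the last turn is the user's."""
    expected = ["user", "assistant"]
    roles_ok = all(m["role"] == expected[i % 2] for i, m in enumerate(messages))
    return roles_ok and len(messages) % 2 == 1


messages = [
    {"role": "user", "content": "Continue the Fibonacci sequence: 1, 1, 2, 3, 5, 8"},
    {"role": "assistant", "content": "The next number in the Fibonacci sequence is 13."},
    {"role": "user", "content": "What is France's tallest tower called?"},
]

print(is_alternating(messages))  # True
```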

France's tallest tower is called the Eiffel Tower.<|end_of_text|>

('lora_model/tokenizer_config.json', 'lora_model/special_tokens_map.json', 'lora_model/tokenizer.json')

Now if you want to load the LoRA adapters we just saved for inference, set False to True:

The sequence 1, 1, 2, 3, 5, 8 is a special sequence known as the Fibonacci sequence. The Fibonacci sequence is a series of numbers where each number is the sum of the two previous numbers, starting with 0 and 1. In this case, the sequence is 1, 1, 2, 3, 5, 8, 13, 21, 34, 55, 89, 144, and so on. The Fibonacci sequence has many interesting properties and is widely studied in mathematics and computer science.<|end_of_text|>

You can also use Hugging Face's AutoPeftModelForCausalLM. Only use this if you do not have unsloth installed. It can be hopelessly slow, since 4bit model downloading is not supported, and Unsloth's inference is 2x faster.

>>> Installing ollama to /usr/local

>>> Downloading Linux amd64 bundle

############################################################################################# 100.0%

>>> Creating ollama user...

>>> Adding ollama user to video group...

>>> Adding current user to ollama group...

>>> Creating ollama systemd service...

WARNING: Unable to detect NVIDIA/AMD GPU. Install lspci or lshw to automatically detect and install GPU dependencies.

>>> The Ollama API is now available at 127.0.0.1:11434.

>>> Install complete. Run "ollama" from the command line.

Next, we shall save the model to GGUF / llama.cpp

We clone llama.cpp and save to q8_0 by default. All quantization methods, such as q4_k_m, are supported. Use save_pretrained_gguf for local saving and push_to_hub_gguf for uploading to HF.

Some supported quant methods (full list on our docs page):

- q8_0 - Fast conversion. High resource use, but generally acceptable.

- q4_k_m - Recommended. Uses Q6_K for half of the attention.wv and feed_forward.w2 tensors, else Q4_K.

- q5_k_m - Recommended. Uses Q6_K for half of the attention.wv and feed_forward.w2 tensors, else Q5_K.

We also support saving to multiple GGUF options in a list fashion! This can speed things up by 10 minutes or more if you want multiple export formats!
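To get a feel for what "high resource use" means for q8_0, the sketch below estimates the stored size of a tensor. It relies on the Q8_0 block layout in llama.cpp (32 weights stored as int8 plus one 2-byte fp16 scale, so 34 bytes per block); the tensor shapes come from the conversion log in this notebook, and the function names are hypothetical.

```python
import math

Q8_0_BLOCK_WEIGHTS = 32  # weights per quantization block
Q8_0_BLOCK_BYTES = 34    # 32 int8 values + one fp16 scale


def q8_0_bytes(shape):
    """Approximate on-disk size of a tensor quantized to Q8_0."""
    n_weights = math.prod(shape)
    if n_weights % Q8_0_BLOCK_WEIGHTS:
        raise ValueError("tensor size must be a multiple of the block size")
    return n_weights // Q8_0_BLOCK_WEIGHTS * Q8_0_BLOCK_BYTES


def fp16_bytes(shape):
    """Size of the same tensor stored in 16-bit floats."""
    return math.prod(shape) * 2


token_embd = (4096, 128256)  # shape from the hf-to-gguf log
print(q8_0_bytes(token_embd), fp16_bytes(token_embd))
print(q8_0_bytes(token_embd) / fp16_bytes(token_embd))  # 0.53125
```

At 8.5 bits per weight, q8_0 is a little over half the size of the 16-bit model, which is why the lower-bit methods are recommended when disk or memory is tight.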

Unsloth: ##### The current model auto adds a BOS token.

Unsloth: ##### Your chat template has a BOS token. We shall remove it temporarily.

Unsloth: You have 1 CPUs. Using `safe_serialization` is 10x slower. We shall switch to Pytorch saving, which will take 3 minutes and not 30 minutes. To force `safe_serialization`, set it to `None` instead.

Unsloth: Kaggle/Colab has limited disk space. We need to delete the downloaded model which will save 4-16GB of disk space, allowing you to save on Kaggle/Colab.

Unsloth: Will remove a cached repo with size 5.7G

Unsloth: Merging 4bit and LoRA weights to 16bit...

Unsloth: Will use up to 5.49 out of 12.67 RAM for saving.

47%|████▋ | 15/32 [00:01<00:01, 9.79it/s]

We will save to Disk and not RAM now.

100%|██████████| 32/32 [01:41<00:00, 3.18s/it]

Unsloth: Saving tokenizer... Done.

Unsloth: Saving model... This might take 5 minutes for Llama-7b...

Unsloth: Saving model/pytorch_model-00001-of-00004.bin...

Unsloth: Saving model/pytorch_model-00002-of-00004.bin...

Unsloth: Saving model/pytorch_model-00003-of-00004.bin...

Unsloth: Saving model/pytorch_model-00004-of-00004.bin...

Done.

Unsloth: Converting llama model. Can use fast conversion = False.

==((====))== Unsloth: Conversion from QLoRA to GGUF information

\\ /| [0] Installing llama.cpp will take 3 minutes.

O^O/ \_/ \ [1] Converting HF to GGUF 16bits will take 3 minutes.

\ / [2] Converting GGUF 16bits to ['q8_0'] will take 10 minutes each.

"-____-" In total, you will have to wait at least 16 minutes.

Unsloth: [0] Installing llama.cpp. This will take 3 minutes...

Unsloth: [1] Converting model at model into q8_0 GGUF format.

The output location will be ./model/unsloth.Q8_0.gguf

This will take 3 minutes...

INFO:hf-to-gguf:Loading model: model

INFO:gguf.gguf_writer:gguf: This GGUF file is for Little Endian only

INFO:hf-to-gguf:Exporting model...

INFO:hf-to-gguf:gguf: loading model weight map from 'pytorch_model.bin.index.json'

INFO:hf-to-gguf:gguf: loading model part 'pytorch_model-00001-of-00004.bin'

INFO:hf-to-gguf:token_embd.weight, torch.float16 --> Q8_0, shape = {4096, 128256}

INFO:hf-to-gguf:blk.0.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.0.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.0.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.0.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.0.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.0.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.0.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.0.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.0.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.1.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.1.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.1.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.1.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.1.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.1.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.1.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.1.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.1.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.2.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.2.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.2.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.2.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.2.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.2.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.2.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.2.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.2.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.3.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.3.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.3.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.3.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.3.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.3.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.3.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.3.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.3.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.4.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.4.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.4.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.4.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.4.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.4.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.4.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.4.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.4.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.5.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.5.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.5.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.5.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.5.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.5.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.5.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.5.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.5.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.6.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.6.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.6.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.6.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.6.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.6.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.6.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.6.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.6.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.7.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.7.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.7.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.7.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.7.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.7.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.7.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.7.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.7.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.8.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.8.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.8.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.8.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.8.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.8.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.8.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.8.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.8.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:gguf: loading model part 'pytorch_model-00002-of-00004.bin'

INFO:hf-to-gguf:blk.9.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.9.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.9.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.9.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.9.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.9.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.9.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.9.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.9.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.10.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.10.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.10.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.10.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.10.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.10.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.10.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.10.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.10.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.11.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.11.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.11.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.11.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.11.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.11.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.11.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.11.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.11.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.12.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.12.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.12.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.12.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.12.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.12.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.12.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.12.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.12.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.13.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.13.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.13.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.13.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.13.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.13.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.13.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.13.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.13.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.14.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.14.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.14.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.14.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.14.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.14.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.14.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.14.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.14.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.15.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.15.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.15.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.15.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.15.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.15.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.15.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.15.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.15.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.16.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.16.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.16.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.16.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.16.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.16.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.16.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.16.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.16.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.17.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.17.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.17.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.17.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.17.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.17.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.17.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.17.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.17.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.18.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.18.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.18.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.18.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.18.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.18.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.18.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.18.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.18.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.19.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.19.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.19.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.19.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.19.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.19.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.19.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.19.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.19.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.20.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.20.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.20.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.20.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.20.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:gguf: loading model part 'pytorch_model-00003-of-00004.bin'

INFO:hf-to-gguf:blk.20.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.20.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.20.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.20.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.21.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.21.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.21.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.21.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.21.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.21.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.21.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.21.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.21.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.22.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.22.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.22.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.22.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.22.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.22.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.22.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.22.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.22.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.23.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.23.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.23.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.23.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.23.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.23.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.23.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.23.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.23.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.24.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.24.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.24.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.24.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.24.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.24.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.24.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.24.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.24.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.25.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.25.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.25.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.25.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.25.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.25.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.25.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.25.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.25.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.26.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.26.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.26.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.26.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.26.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.26.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.26.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.26.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.26.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.27.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.27.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.27.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.27.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.27.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.27.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.27.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.27.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.27.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.28.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.28.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.28.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.28.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.28.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.28.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.28.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.28.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.28.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.29.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.29.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.29.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.29.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.29.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.29.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.29.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.29.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.29.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.30.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.30.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.30.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.30.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.30.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.30.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.30.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.30.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.30.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.31.attn_q.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.31.attn_k.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.31.attn_v.weight, torch.float16 --> Q8_0, shape = {4096, 1024}

INFO:hf-to-gguf:blk.31.attn_output.weight, torch.float16 --> Q8_0, shape = {4096, 4096}

INFO:hf-to-gguf:blk.31.ffn_gate.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:blk.31.ffn_up.weight, torch.float16 --> Q8_0, shape = {4096, 14336}

INFO:hf-to-gguf:gguf: loading model part 'pytorch_model-00004-of-00004.bin'

INFO:hf-to-gguf:blk.31.ffn_down.weight, torch.float16 --> Q8_0, shape = {14336, 4096}

INFO:hf-to-gguf:blk.31.attn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:blk.31.ffn_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:output_norm.weight, torch.float16 --> F32, shape = {4096}

INFO:hf-to-gguf:output.weight, torch.float16 --> Q8_0, shape = {4096, 128256}

INFO:hf-to-gguf:Set meta model

INFO:hf-to-gguf:Set model parameters

INFO:hf-to-gguf:gguf: context length = 8192

INFO:hf-to-gguf:gguf: embedding length = 4096

INFO:hf-to-gguf:gguf: feed forward length = 14336

INFO:hf-to-gguf:gguf: head count = 32

INFO:hf-to-gguf:gguf: key-value head count = 8

INFO:hf-to-gguf:gguf: rope theta = 500000.0

INFO:hf-to-gguf:gguf: rms norm epsilon = 1e-05

INFO:hf-to-gguf:gguf: file type = 7

INFO:hf-to-gguf:Set model tokenizer

INFO:gguf.vocab:Adding 280147 merge(s).

INFO:gguf.vocab:Setting special token type bos to 128000

INFO:gguf.vocab:Setting special token type eos to 128001

INFO:gguf.vocab:Setting special token type pad to 128255

INFO:gguf.vocab:Setting chat_template to {{ 'Below are some instructions that describe some tasks. Write responses that appropriately complete each request.' }}{% for message in messages %}{% if message['role'] == 'user' %}{{ '

### Instruction:

' + message['content'] }}{% elif message['role'] == 'assistant' %}{{ '

### Response:

' + message['content'] + '<|end_of_text|>' }}{% else %}{{ raise_exception('Only user and assistant roles are supported!') }}{% endif %}{% endfor %}{% if add_generation_prompt %}{{ '

### Response:

' }}{% endif %}

INFO:hf-to-gguf:Set model quantization version

INFO:gguf.gguf_writer:Writing the following files:

INFO:gguf.gguf_writer:model/unsloth.Q8_0.gguf: n_tensors = 291, total_size = 8.5G

Writing: 100%|██████████| 8.53G/8.53G [03:05<00:00, 46.1Mbyte/s]

INFO:hf-to-gguf:Model successfully exported to model/unsloth.Q8_0.gguf

Unsloth: ##### The current model auto adds a BOS token.

Unsloth: ##### We removed it in GGUF's chat template for you.

Unsloth: Conversion completed! Output location: ./model/unsloth.Q8_0.gguf

Unsloth: Saved Ollama Modelfile to model/Modelfile
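As a rough sanity check on the reported 8.53G, the file size can be re-derived from the tensor shapes in the log. The sketch below rests on a few assumptions: Q8_0 stores each block of 32 weights as 32 int8 values plus one 16-bit scale (34 bytes per block), `token_embd.weight` (logged earlier, not shown in this part of the output) has the same shape and type as `output.weight`, and the metadata and tokenizer stored in the file are small enough to ignore. It also shows where the `{4096, 1024}` shape of `attn_k` and `attn_v` comes from.

```python
# Values copied from the conversion log above.
N_LAYERS = 32
HIDDEN = 4096        # embedding length
FFN = 14336          # feed forward length
VOCAB = 128256
N_HEADS = 32         # head count
N_KV_HEADS = 8       # key-value head count

# Grouped-query attention: K and V use fewer heads than Q,
# so their projections are narrower than the hidden size.
head_dim = HIDDEN // N_HEADS
kv_dim = N_KV_HEADS * head_dim

# Weights stored as Q8_0 in each transformer block.
per_layer_q8 = (
    2 * HIDDEN * HIDDEN    # attn_q, attn_output
    + 2 * HIDDEN * kv_dim  # attn_k, attn_v
    + 3 * HIDDEN * FFN     # ffn_gate, ffn_up, ffn_down
)

# Assumption: token_embd.weight matches output.weight in shape and type.
q8_weights = N_LAYERS * per_layer_q8 + 2 * VOCAB * HIDDEN

# Norm vectors are kept in F32: two per block plus output_norm.
f32_weights = (2 * N_LAYERS + 1) * HIDDEN

# Nine tensors per block, plus token_embd, output_norm and output.
n_tensors = 9 * N_LAYERS + 3

# Assumption: a Q8_0 block is 32 int8 weights + one fp16 scale = 34 bytes.
Q8_BLOCK_WEIGHTS = 32
Q8_BLOCK_BYTES = 34
total_bytes = q8_weights // Q8_BLOCK_WEIGHTS * Q8_BLOCK_BYTES + f32_weights * 4

print(f"kv_dim = {kv_dim}")
print(f"n_tensors = {n_tensors}")
print(f"estimated size = {total_bytes / 1e9:.2f} GB")
```

Under these assumptions the estimate agrees with the `n_tensors = 291` and `8.53G` figures printed by the GGUF writer.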

We use `subprocess` to start Ollama in a non-blocking fashion! On your own desktop, you can simply open a new terminal and run `ollama serve`, but in Colab we have to use this workaround.
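A minimal sketch of that workaround is shown below. It assumes the `ollama` binary is already installed and on the `PATH`; the helper names and the three-second startup pause are illustrative choices, not something the notebook prescribes.

```python
import shutil
import subprocess
import time


def start_in_background(cmd, startup_wait=0.0):
    """Launch cmd without blocking the notebook and return the process handle."""
    proc = subprocess.Popen(cmd)  # returns immediately; the child keeps running
    time.sleep(startup_wait)      # optional pause so the child can finish starting
    return proc


def start_ollama_server(startup_wait=3.0):
    """Start `ollama serve` in the background, or return None if ollama is missing."""
    if shutil.which("ollama") is None:
        return None
    return start_in_background(["ollama", "serve"], startup_wait)
```

Calling `start_ollama_server()` in a cell leaves the server running while later cells execute. Keeping the returned handle lets you call `.terminate()` on it when you are done.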

Ollama needs a Modelfile, which specifies the model's prompt format. Let's print Unsloth's auto-generated one:

FROM {__FILE_LOCATION__}

TEMPLATE """Below are some instructions that describe some tasks. Write responses that appropriately complete each request.{{ if .Prompt }}

### Instruction:

{{ .Prompt }}{{ end }}

### Response:

{{ .Response }}<|end_of_text|>"""

PARAMETER stop "<|eot_id|>"

PARAMETER stop "<|start_header_id|>"

PARAMETER stop "<|end_header_id|>"

PARAMETER stop "<|end_of_text|>"

PARAMETER stop "<|reserved_special_token_"

PARAMETER temperature 1.5

PARAMETER min_p 0.1
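To give a feel for what `min_p 0.1` in the Modelfile above means, here is a small sketch of min-p filtering in its commonly described form: tokens whose probability falls below `min_p` times the probability of the most likely token are dropped, and the rest are renormalized. The function name and the toy distribution are hypothetical, and how Ollama combines this step with `temperature` internally is not covered here.

```python
def min_p_filter(probs, min_p=0.1):
    """Keep tokens with probability >= min_p * max probability, then renormalize."""
    threshold = min_p * max(probs.values())
    kept = {tok: p for tok, p in probs.items() if p >= threshold}
    total = sum(kept.values())
    return {tok: p / total for tok, p in kept.items()}


# Toy next-token distribution (illustrative values only).
toy_probs = {"8": 0.60, "13": 0.30, "banana": 0.05, "?": 0.05}
filtered = min_p_filter(toy_probs, min_p=0.1)
print(filtered)
```

With the toy values, the threshold is 0.1 × 0.60 = 0.06, so the two 0.05 tokens are removed and sampling continues over the remaining two.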

We will now create an Ollama model called unsloth_model using the auto-generated Modelfile!
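One way to run this step from Python is sketched below. It wraps the `ollama create <name> -f <Modelfile>` command and assumes the Ollama server is already running and that the Modelfile sits at `./model/Modelfile`, the location reported by the conversion output. The helper names are hypothetical.

```python
import subprocess


def build_create_command(model_name, modelfile_path):
    """Return the argument list for `ollama create`."""
    return ["ollama", "create", model_name, "-f", modelfile_path]


def create_model(model_name="unsloth_model", modelfile_path="./model/Modelfile"):
    """Run `ollama create`; raises CalledProcessError if the command fails."""
    subprocess.run(build_create_command(model_name, modelfile_path), check=True)
```

Passing the arguments as a list avoids shell quoting problems if the model name or path contains spaces.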

transferring model data

creating new layer sha256:94728011329d3d304c40e235f81f1b75580e163036c07d98382dc5548d555a34

creating new layer sha256:95b5361453780fb5797ce5abfe9a330f5d33fdec13d2232ef1443ee0c3a86ecc

creating new layer sha256:57675488fe3dd2a75da06ae97984c4ce6f382208e9d989c584b22ee395bab0d8

creating new layer sha256:e706dd26476841ded603017f70f5b99b5be356caa859878787bfc3898d547f08

writing manifest

success

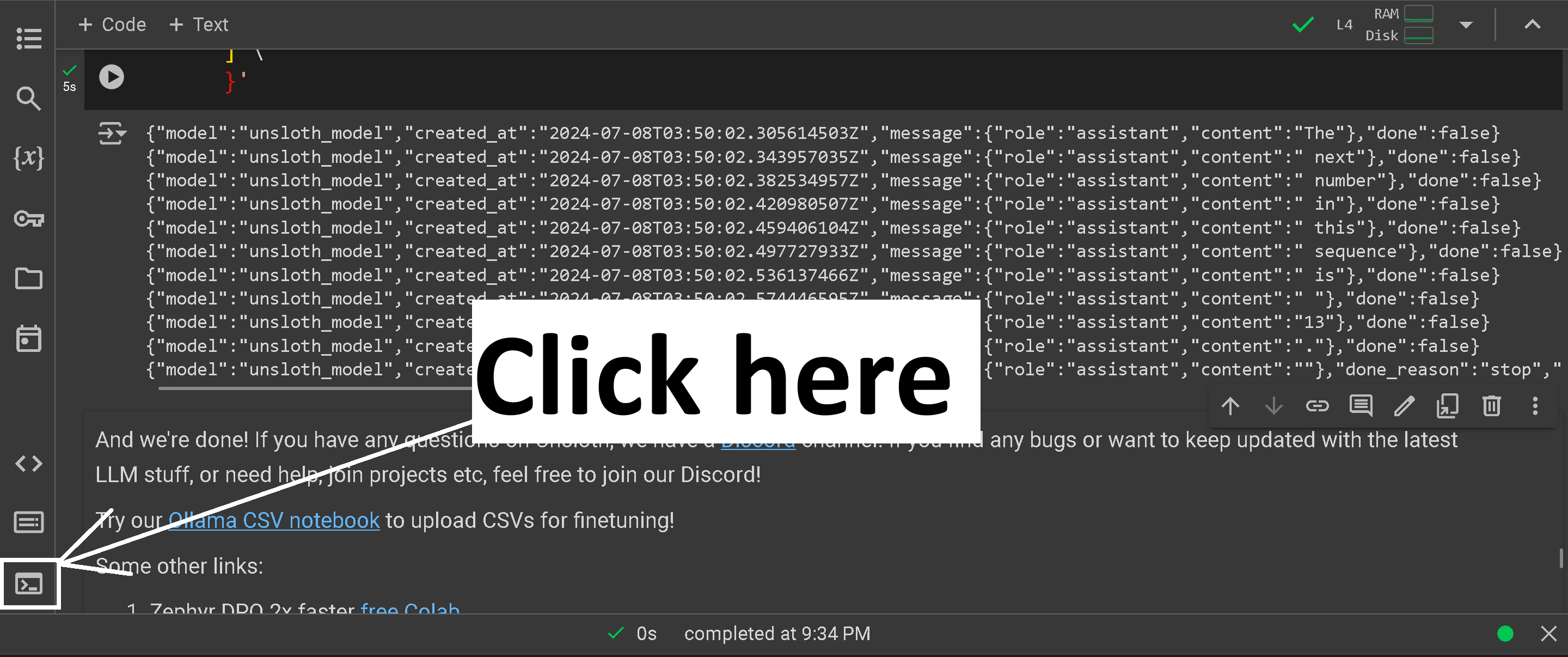

And now we can do inference on it via Ollama!

You can also upload to Ollama and try the Ollama Desktop app by heading to https://www.ollama.com/
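As a rough sketch of what such an inference call can look like from Python, the snippet below builds a streaming chat request. It assumes the Ollama server is listening on its default local address (`http://localhost:11434`) and that the model was registered as `unsloth_model`, the name that appears in the output below. The helper names `build_chat_request` and `stream_chat` and the example prompt are illustrative and not taken from this notebook.

```python
import json
import urllib.request

# Assumption: Ollama's default local endpoint; adjust if your server differs.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"


def build_chat_request(model, prompt, url=OLLAMA_CHAT_URL):
    """Build (but do not send) a POST request for Ollama's chat endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": True,  # ask for one JSON object per generated chunk
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def stream_chat(model, prompt):
    """Send the request and yield each streamed JSON line as a dict."""
    with urllib.request.urlopen(build_chat_request(model, prompt)) as response:
        for raw_line in response:
            if raw_line.strip():
                yield json.loads(raw_line)


# Usage (needs a running Ollama server; the prompt is hypothetical):
# for chunk in stream_chat("unsloth_model", "What comes after 8 in the Fibonacci sequence?"):
#     print(chunk["message"]["content"], end="", flush=True)
```

Building the request separately from sending it keeps the payload easy to inspect before any network call is made.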

{"model":"unsloth_model","created_at":"2024-10-01T06:47:04.241326628Z","message":{"role":"assistant","content":"The"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:04.465575479Z","message":{"role":"assistant","content":" next"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:04.760101468Z","message":{"role":"assistant","content":" number"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:05.051240606Z","message":{"role":"assistant","content":" in"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:05.376545126Z","message":{"role":"assistant","content":" the"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:05.515751946Z","message":{"role":"assistant","content":" Fibonacci"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:05.658721744Z","message":{"role":"assistant","content":" sequence"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:05.795226527Z","message":{"role":"assistant","content":" after"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:05.923676364Z","message":{"role":"assistant","content":" "},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:06.053599585Z","message":{"role":"assistant","content":"8"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:06.187220374Z","message":{"role":"assistant","content":" is"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:06.316237671Z","message":{"role":"assistant","content":" "},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:06.448901764Z","message":{"role":"assistant","content":"13"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:06.585864644Z","message":{"role":"assistant","content":" ("},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:06.712030586Z","message":{"role":"assistant","content":"the"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:06.835728964Z","message":{"role":"assistant","content":" sum"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:06.962898827Z","message":{"role":"assistant","content":" of"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:07.088064406Z","message":{"role":"assistant","content":" the"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:07.212942126Z","message":{"role":"assistant","content":" previous"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:07.336569966Z","message":{"role":"assistant","content":" two"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:07.46094096Z","message":{"role":"assistant","content":" numbers"},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:07.593857726Z","message":{"role":"assistant","content":")."},"done":false}

{"model":"unsloth_model","created_at":"2024-10-01T06:47:07.741203726Z","message":{"role":"assistant","content":""},"done_reason":"stop","done":true,"total_duration":3741960321,"load_duration":48967410,"prompt_eval_count":47,"prompt_eval_duration":150430000,"eval_count":23,"eval_duration":3499634000}

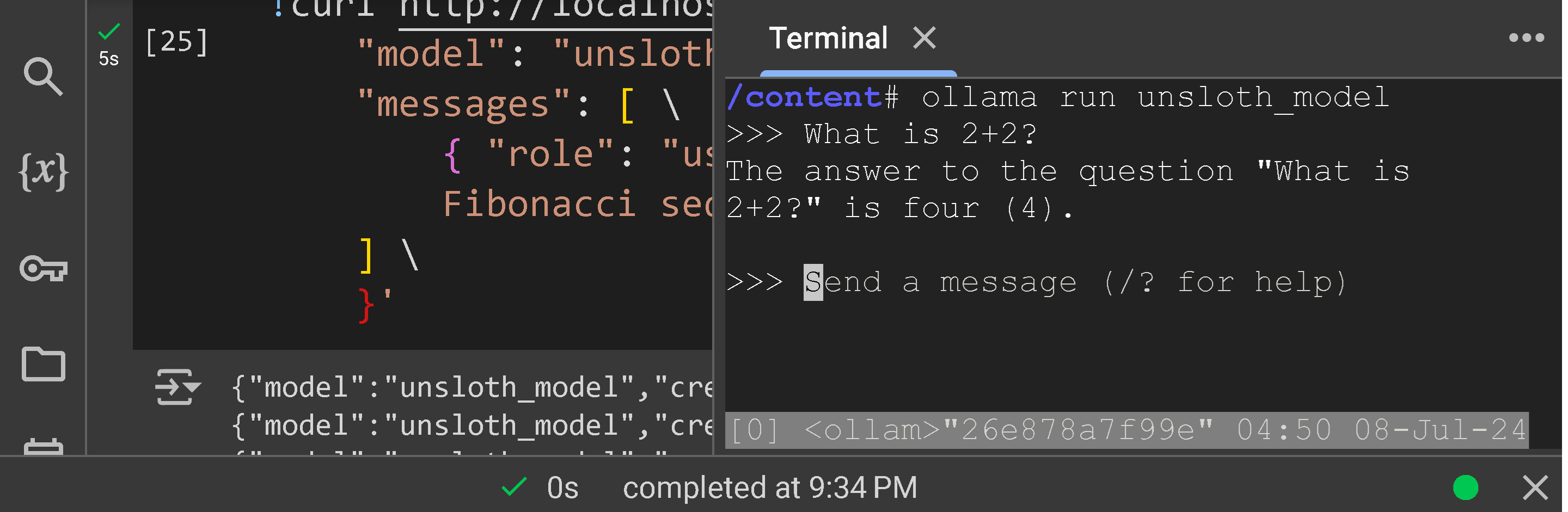

ChatGPT interactive mode

⭐ To chat with the finetuned model in a ChatGPT-style interface, first click the | >_ | button.

⭐ Then, type ollama run unsloth_model

⭐ And you have a ChatGPT-style assistant!

Type any question you like and press ENTER. If you want to exit, hit CTRL + D

And we're done! If you have any questions about Unsloth, find any bugs, need help, or want to keep up with the latest LLM news and join projects, feel free to join our Discord!

Some other resources:

- Looking to use Unsloth locally? Read our Installation Guide for details on installing Unsloth on Windows, Docker, AMD, and Intel GPUs.

- Learn how to do Reinforcement Learning with our RL Guide and notebooks.

- Read our guides and notebooks for Text-to-speech (TTS) and vision model support.

- Explore our LLM Tutorials Directory to find dedicated guides for each model.

- Need help with Inference? Read our Inference & Deployment page for details on using vLLM, llama.cpp, Ollama etc.